Parallel Multiple Viewpoint Rendering

Goal: Find an efficient solution for rendering multiple viewpoints in a PC cluster.

Motivation:

Most visualization systems assume that the scene is observed from a single viewpoint. Although this covers the majority of cases, some applications require rendering multiple viewpoints of the same scene. In an engineering setting, we may imagine different teams working remotely with 3D data from a central repository. Another possible application is massively multiplayer games, where many players share the same environment simultaneously. The generation of multiple viewpoints may also be used to create three-dimensional perception in holographic or auto-stereoscopic displays.

Rendering multiple views is a difficult problem, since the choice of viewpoint is one of the first steps performed in a traditional rendering algorithm. When multiple views are required, e.g. to render a stereo pair, the whole process is repeated for each viewpoint. Some algorithms try to exploit the similarity between the views to reduce the computational cost. However, a change in viewpoint often introduces changes in the image that are not easily predicted. Changes can occur in illumination, occlusion, or the level of detail of the objects.

Approach:

The idea of my master's thesis was to reuse already computed samples whenever possible, so that each one is computed only once. Reconstructing images from other images, instead of from the original primitives, is called image-based rendering. The architecture was developed for PC clusters with graphics cards, which handle the rendering requests, and used a lightfield generator to synthesize the output images. A lightfield, or lumigraph, is a technique that enables the rendering of new viewpoints based on a description of the light flux in the scene.
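The sample-reuse idea can be illustrated with a minimal Python sketch. It is not the thesis implementation: the `SampleCache` class, the camera grid indexing, and the `neighbours` helper are hypothetical names, and the renderer is replaced by a placeholder function. It only shows that when several viewers need overlapping sample cameras, each sample is rendered once and then shared.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical (row, column) index of a sample camera on a regular grid.
CameraId = Tuple[int, int]


class SampleCache:
    """Renders each sample camera at most once and reuses it across viewpoints."""

    def __init__(self, render: Callable[[CameraId], object]) -> None:
        self._render = render
        self._samples: Dict[CameraId, object] = {}
        self.render_calls = 0  # number of times the renderer was actually invoked

    def get(self, cam: CameraId) -> object:
        if cam not in self._samples:
            self._samples[cam] = self._render(cam)
            self.render_calls += 1
        return self._samples[cam]


def neighbours(x: float, y: float) -> List[CameraId]:
    """The four grid cameras surrounding a viewer at non-negative position (x, y)."""
    i, j = int(x), int(y)
    return [(i, j), (i + 1, j), (i, j + 1), (i + 1, j + 1)]


# Placeholder renderer: a real system would issue a rendering request here.
cache = SampleCache(render=lambda cam: f"image{cam}")

# Three viewers, each needing four samples: 12 requests in total.
for viewer in [(0.2, 0.3), (0.7, 0.6), (1.4, 0.5)]:
    views = [cache.get(cam) for cam in neighbours(*viewer)]

print(cache.render_calls)  # prints 6: half of the 12 requests were served from the cache
```

The more the viewers' neighbourhoods overlap, the larger the share of requests that can be answered without rendering again.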

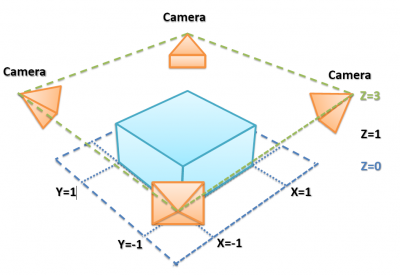

1-The scene is sampled by many cameras

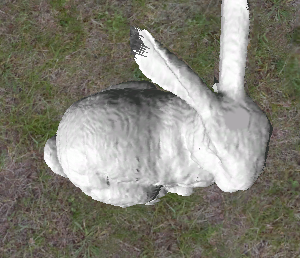

2-A new viewpoint is synthesized from the samples

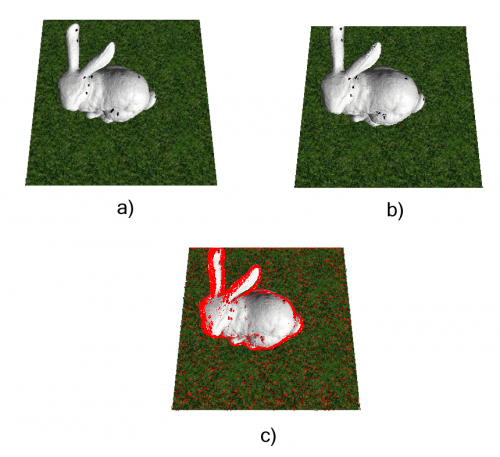

3-The output is compared with a render from the original data
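The three steps above can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not the lightfield generator used in the thesis: the `render` function is a toy stand-in for the real renderer, the cameras sit on an integer grid, and a new view is obtained by blending the four nearest sample images with bilinear weights. Actual light field rendering resamples individual rays rather than whole images.

```python
import math
from typing import List

Image = List[List[float]]
H, W = 4, 6  # tiny image size, for illustration only


def render(x: float, y: float) -> Image:
    """Toy stand-in for the real renderer: an image that shifts with the camera."""
    return [[math.sin(0.5 * (u + x)) + math.cos(0.5 * (v + y)) for u in range(W)]
            for v in range(H)]


def sample_scene(n: int) -> List[List[Image]]:
    """Step 1: render the scene from an n x n grid of cameras at integer positions."""
    return [[render(i, j) for j in range(n)] for i in range(n)]


def synthesize(samples: List[List[Image]], x: float, y: float) -> Image:
    """Step 2: blend the four nearest sample images with bilinear weights.

    Assumes n >= 2 and 0 <= x, y <= n - 1.
    """
    n = len(samples)
    i = min(int(x), n - 2)
    j = min(int(y), n - 2)
    fx, fy = x - i, y - j
    corners = [
        (samples[i][j], (1 - fx) * (1 - fy)),
        (samples[i + 1][j], fx * (1 - fy)),
        (samples[i][j + 1], (1 - fx) * fy),
        (samples[i + 1][j + 1], fx * fy),
    ]
    return [[sum(w * img[v][u] for img, w in corners) for u in range(W)]
            for v in range(H)]


def mse(a: Image, b: Image) -> float:
    """Step 3: mean squared error between two images of size H x W."""
    return sum((pa - pb) ** 2
               for ra, rb in zip(a, b)
               for pa, pb in zip(ra, rb)) / (H * W)


samples = sample_scene(3)               # 1: sample the scene
novel = synthesize(samples, 0.5, 1.5)   # 2: synthesize a view between cameras
reference = render(0.5, 1.5)            # 3: render the same view directly
error = mse(novel, reference)
print(f"MSE against direct render: {error:.6f}")
```

In this toy setting the error is small but not zero, because the blended image only approximates what a camera at the new position would see. A denser camera grid reduces the error at the cost of more samples.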

This work was published at the XXI Brazilian Symposium on Computer Graphics and Image Processing and in The Visual Computer:

- Lages, W. S.; Cordeiro, C. S.; Guedes, D. Performance Analysis of a Parallel Multi-view Rendering Architecture Using Light Fields. The Visual Computer, 25(10), October, Springer Berlin / Heidelberg.

- Lages, W.; Cordeiro, C.; Guedes, D. A Parallel Multi-View Rendering Architecture. In: SIBGRAPI-2008: XXI Brazilian Symposium on Computer Graphics and Image Processing, 2008, Campo Grande, Brazil.

- Lages, W. Uma Arquitetura Paralela para a Renderização de Múltiplos Pontos de Vista [A Parallel Architecture for Rendering Multiple Viewpoints]. Master's thesis. DCC-UFMG, 2008.